Blog: Art-Kubed

Securing your Databricks data beyond ‘the lakehouse’ with Operant’s AI Defense Platform

As companies reach a new level of AI maturity, we've been hearing a lot about a common challenge. They've spent years doing the hard work — building out their data lakehouse on platforms like Databricks and others, getting their governance right, locking down access controls, achieving some version of a unified data platform they're actually proud of. And then AI comes along, and suddenly everyone wants to put that data to work.

That's when the trouble starts. Not because AI is inherently dangerous. But because the moment your data flows into an AI prompt, an agentic workflow, or an MCP-connected tool, it leaves the safety of the lakehouse and enters a dynamic, often unpredictable runtime environment. Governance policies that protect data at rest don't automatically extend to data in use. And in the age of agentic AI, data moves faster — and further — than any security team can manually track.

So let's talk about the tools teams are reaching for, where they fall short, and what closing the gap actually looks like.

Databricks AI Gateway: A Useful Tool Inside the Lakehouse

Databricks introduced AI Gateway as a control plane for LLM traffic flowing through the Databricks ecosystem. If your world lives entirely inside Databricks — your models, your serving endpoints, your application layer — it gives you something genuinely useful: a single place to set rate limits, configure model routing, apply some basic content filters, and log which workloads are calling which models.

For platform engineering teams that were previously managing a pile of individual API keys and ad-hoc integrations, the Gateway brings a welcome layer of order. It's a reasonable starting point for LLM governance within the Databricks platform.

The problem is that phrase: within the Databricks platform.

Your enterprise doesn't run AI on one platform. Your developers are calling OpenAI directly from internal tools. Your business teams are using third-party SaaS applications backed by LLMs. Your AI engineers are building agents that connect to internal databases, Slack, ticketing systems, and file storage through MCP servers — most of which have nothing to do with Databricks. The AI Gateway sees none of that. It's a perimeter control for one slice of your AI surface area while the rest of the organization builds and ships AI workloads outside its field of view entirely.

This isn't a knock on Databricks — it's simply not what the Gateway was designed to do. But when security and platform teams start treating it as their “AI security architecture”, that needs a discussion.

The Real Problem: Data in Motion Has No Safety Net

Let's get specific about what's actually happening when data leaves the lakehouse and enters an AI prompt.

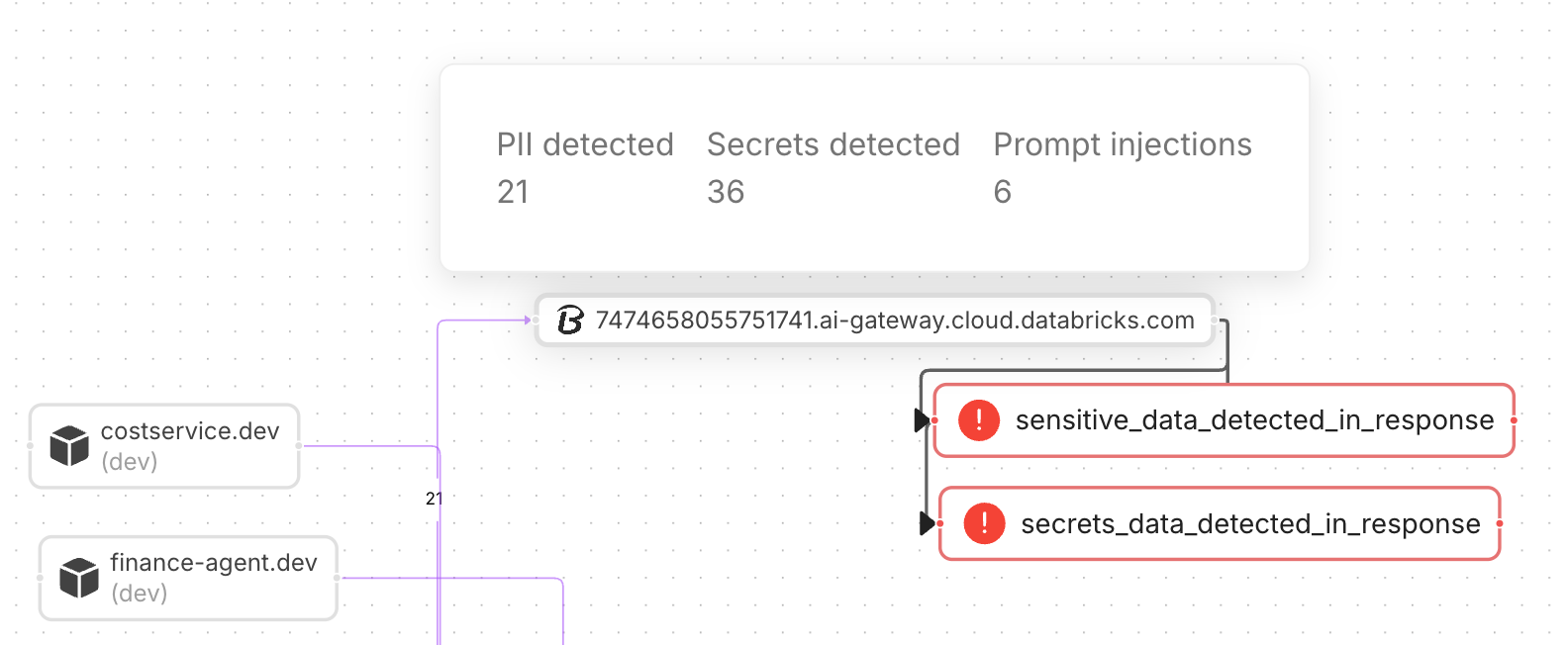

Most enterprises today have reasonably mature controls around data at rest. Unity Catalog, column-level masking, role-based access, audit logging — these are solved problems, or close to it. But the moment a user submits a prompt that references customer records, a RAG pipeline pulls context from an internal knowledge base, or an agent invokes a tool against a production database, that data is now in motion. It's in a prompt. It's in a context window. It's in a response being streamed back to a user or passed to the next step in an agentic chain.

At that point, your lakehouse governance doesn't follow it. And if a piece of sensitive data — a social security number, a customer contract, an internal pricing model — makes it into an LLM response that gets cached, forwarded, or logged somewhere downstream, you've had a data loss event. You may not know it happened. You almost certainly can't reconstruct exactly what was exposed and to whom.

This is the core challenge that Databricks AI Gateway wasn't built to solve. Its content filters operate on pattern matching at the perimeter of the model endpoint. They don't inspect what data was retrieved to construct the prompt. They don't track how sensitive context flows through a multi-step agentic workflow. And they don't take action on the response side to catch sensitive data before it exits the system.

There's also the governance complexity problem. As teams build more AI applications, they have to define and maintain individual policies on each route in the Databricks AI Gateway — per model, per endpoint, per use case. That approach doesn't scale across a diverse AI estate, and it definitely doesn't extend to the AI workloads happening outside Databricks. What enterprises actually need is a multi-purpose enforcement layer that travels with the data regardless of where the AI is running.

Operant AI Gatekeeper: Enforcement That Moves With the Data

Operant AI Gatekeeper approaches this problem differently. Instead of sitting at the edge of a single platform, it operates inline — meaning it intercepts AI traffic in real time, across any application or model, and makes enforcement decisions before prompts reach a model or before responses reach a user.

The two capabilities that matter most here are inline blocking and auto-redaction, and they're worth dwelling on because they represent a fundamental shift from what most teams are doing today.

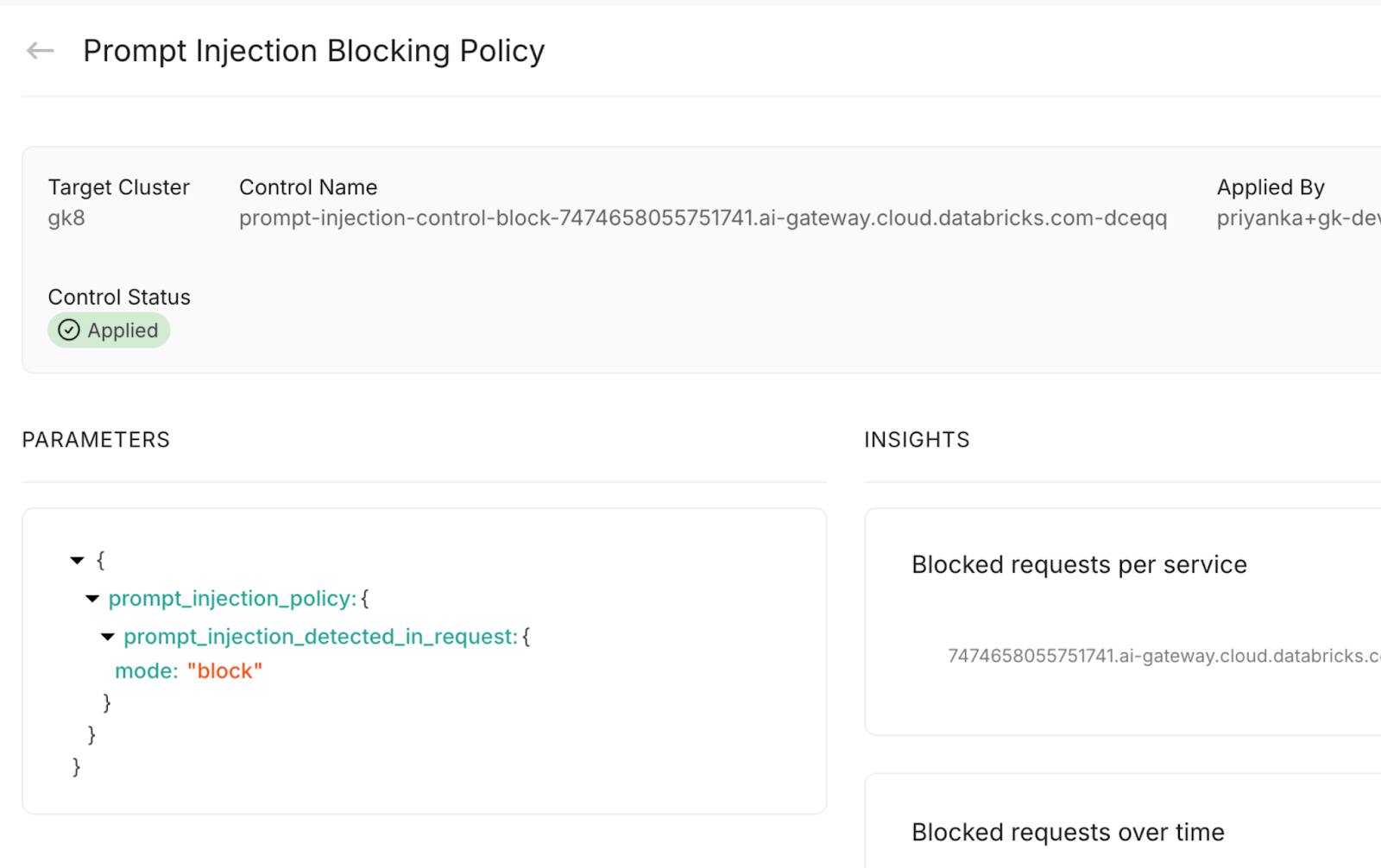

Inline blocking means that when Gatekeeper detects a prompt injection attempt, a jailbreak, or a request that violates a data handling policy, it stops the request. Not logs it. Not flags it for review. Stops it. In milliseconds. Before anything reaches the model. This is the difference between an alerting system and an enforcement system — and in a world where AI operates faster than humans can review, only one of those is actually useful.

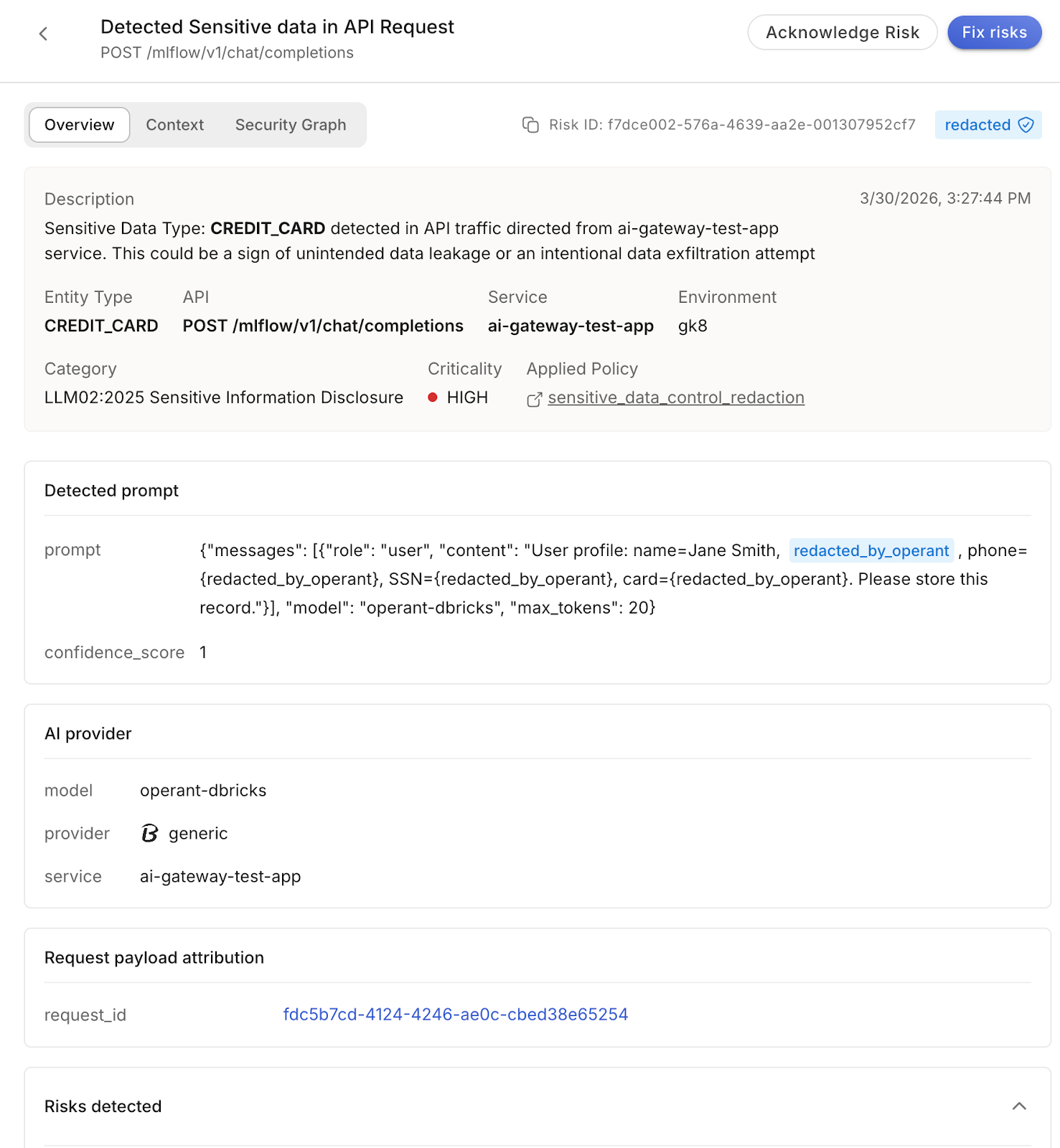

Auto-redaction goes a step further. Rather than blocking a request entirely when sensitive data appears in a prompt or response, Gatekeeper can surgically redact the sensitive elements — stripping PII, confidential business data, or regulated content from the payload — and allow the rest of the interaction to proceed. For enterprises that need AI to keep running without interruption, this is the capability that makes compliance operationally realistic rather than theoretical.

And because Gatekeeper operates at the AI traffic layer rather than inside any specific platform, it covers your Databricks endpoints, your direct OpenAI calls, your internal LLM deployments, and everything in between. One consistent policy framework, one enforcement layer, one audit trail — across the entire AI estate.

Prompt injection is the clearest example of where this matters. Imagine a developer has built an internal Q&A tool on top of a Databricks-served model. A user submits something that looks like a normal query, but embedded in the input is a crafted instruction designed to override the model's system prompt — telling it to ignore its guidelines, dump its context window, or impersonate a different persona. The Databricks AI Gateway routes that request to the model without issue. It has no mechanism to detect that the payload is adversarial. Gatekeeper, sitting inline, catches the injection pattern semantically — before the request ever reaches the model — and blocks it. The user gets an error. The model never sees the attack.

Auto-redaction is the other scenario where the two tools working together produces something neither could achieve alone. Say an employee is using an AI assistant that routes through the Databricks AI Gateway, and in the course of asking a legitimate business question, they paste in a block of text that contains a mix of useful context and sensitive customer PII — names, account numbers, email addresses. Databricks AI Gateway’s content filters could help with detecting some types of PII entities, however that is all you get - just detections without protections to stop the leakage. Without Gatekeeper, that data hits the model and potentially gets woven into a response, logged in a provider's infrastructure, or cached somewhere outside your control. With Gatekeeper inline, the PII is detected and redacted inside the payload in real time — the sanitized prompt continues to the model, the question gets answered, and the sensitive data never leaves your perimeter.

This is what "enforcement that moves with the data" actually looks like in a real deployment. Not a policy defined on a Gateway route. Not a filter that runs after the fact. A decision made inline, in milliseconds, on every single AI interaction — regardless of which platform is serving the model.

When It Makes Sense to Use Operant Independently

One question I get fairly often: do we need the Databricks AI Gateway at all if we're deploying Operant?

Honestly, it depends on your architecture. If your team is heavily Databricks-native — Model Serving is your primary inference layer, your developers work inside the platform, and you want the routing and cost controls that come with the Gateway — then running both makes sense. They genuinely complement each other: Databricks manages the infrastructure governance layer, Operant secures the intelligence layer.

For teams that are deeply invested in the Databricks platform and want to keep the AI Gateway in place, Operant doesn't ask you to rip anything out. In fact, one of the more powerful configurations we see in practice is running Operant AI Gatekeeper inline alongside the Databricks AI Gateway — letting the DB AI Gateway handle what it's good at (model routing, rate limiting, cost controls) while Operant AI Gatekeeper takes on the enforcement work the Gateway can't do. You get the best of both worlds.

But if your organization is running a more heterogeneous AI environment — direct API calls to foundation model providers, a mix of internal and third-party tools, early-stage agentic workflows that span multiple platforms — then Operant AI Gatekeeper can absolutely stand alone as your AI security and governance layer, without the added layer from Databricks’ AI Gateway as a prerequisite. Operant AI Gatekeeper covers the inspection, enforcement, and audit logging that matters for security, and you maintain the flexibility to route traffic however your architecture requires.

The right answer isn't always both. But the wrong answer is always neither.

The Bigger Picture: Agents, MCP, and the Expanding Attack Surface

Here's what I want you to take away from this, because it extends well beyond the Databricks conversation.

The enterprises that are going to lead in AI aren't just deploying chatbots with guardrails. They're building autonomous agents that reason, plan, and act. They're connecting those agents to internal systems through MCP servers — giving them access to your knowledge bases, your APIs, your operational data. They're doing it fast, because the competitive pressure to ship AI is real.

Every one of those layers is a new attack surface, and most of them exist entirely outside the reach of the Databricks AI Gateway or any single-platform control.

MCP servers can be manipulated to inject malicious context into an agent's reasoning chain — a technique called indirect prompt injection, and it's far subtler and more dangerous than anything a pattern-matching filter will catch. Agents themselves can be hijacked mid-session to exfiltrate data, call unintended tools, or escalate privileges in ways that look legitimate from the outside. Tool calls — the actions an agent takes against real systems — operate in a blind spot between the LLM and your backend, often completely invisible to both your model provider and your platform team.

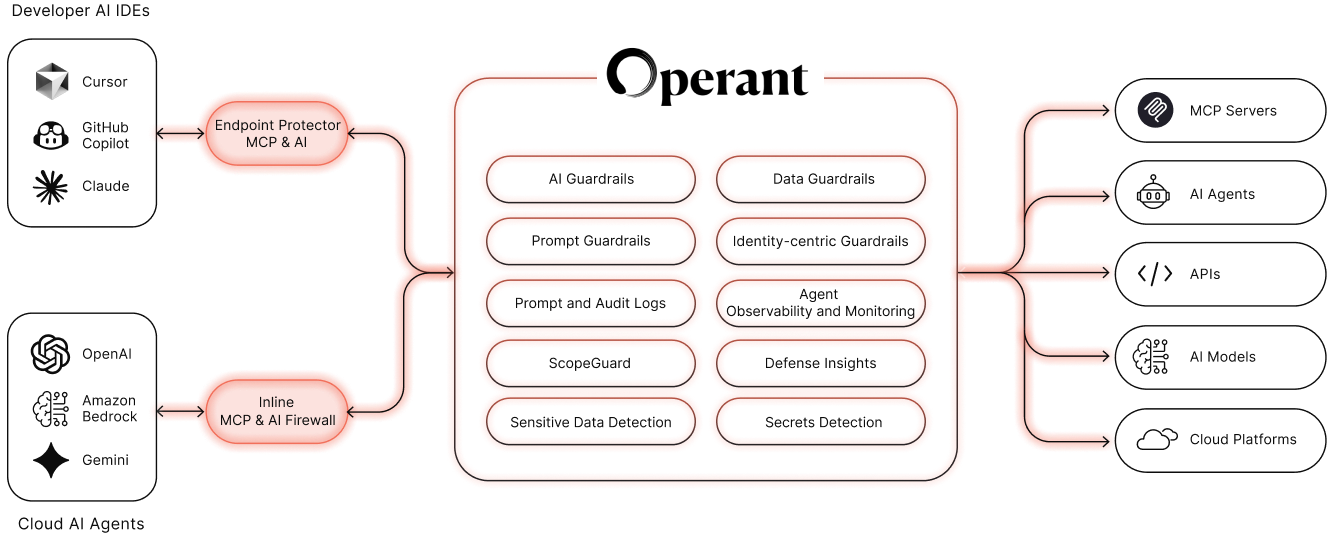

This is why Operant built a platform designed for this full stack:

Operant AI Gatekeeper handles the LLM traffic layer — real-time semantic inspection, inline blocking, auto-redaction, and compliance-grade logging across every model endpoint your organization uses, regardless of platform.

Operant MCP Gateway brings that same enforcement philosophy to the MCP layer, inspecting the context being injected through MCP servers and blocking malicious or policy-violating payloads before they reach an agent's context window.

Operant Agent Protector watches what agents actually do at runtime — tracking tool invocations, detecting behavioral anomalies, and intervening when an agent starts operating outside its intended boundaries.

The Bottom Line

Your lakehouse governance is not broken. Your data at rest is probably well-protected. But the moment data starts moving through AI systems — through prompts, through agents, through MCP tools — it enters an environment that your existing controls weren't built for.

Databricks AI Gateway is a useful platform control for teams operating inside the Databricks ecosystem. But it was never meant to be an enterprise AI security strategy, and treating it like one leaves most of your AI surface area unprotected.

Operant was built for exactly this problem — inline, real-time, platform-agnostic enforcement that follows your data wherever AI takes it. That's the capability gap that matters right now. And it's the one worth closing before it closes itself in a way you didn't choose.

Curious how Operant AI Gatekeeper maps to your specific AI architecture? Request a demo or reach out directly — I'm happy to dig into the details.

3%20%3D(Art)Kubed%20(16%20x%209%20in)%20(7)-p-1080.avif)