What Your "Enterprise" AI License Doesn't Actually Protect


You signed the enterprise agreement. Legal reviewed it. IT approved the vendor. The procurement process took three months. And now your organization is using AI at scale with confidence that the "enterprise tier" means you're covered.

You're not.

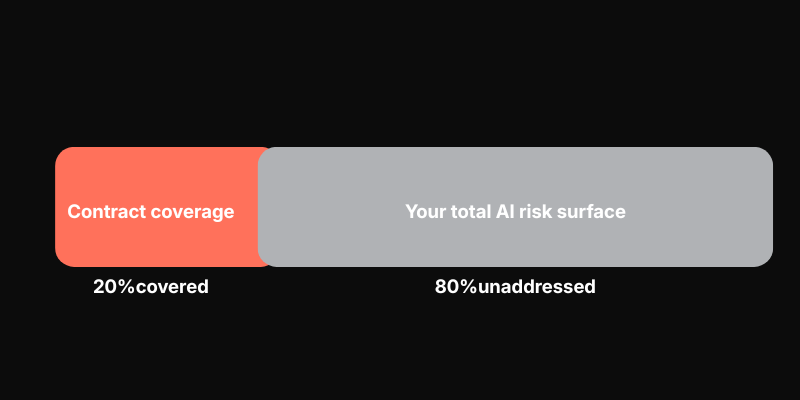

Enterprise AI agreements solve for a specific, narrow problem: ensuring the vendor doesn't use your data to train their models. That's real protection, and it matters. But it addresses maybe 20% of your actual risk surface. The breaches, shadow usage, regulatory exposure, and credential theft live entirely outside that contract.

That leaves the other 80%. Here is what it contains, and what real protection looks like.

Third-Party Breach Exposure (Severity: Critical)

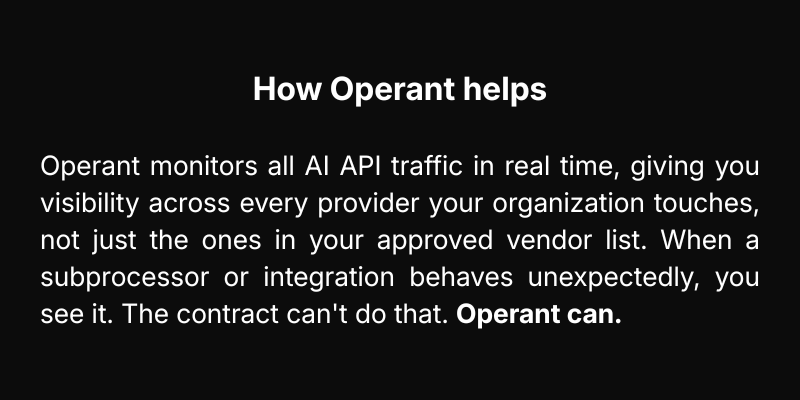

Selecting an enterprise-grade vendor feels like selecting a secure environment. That's a reasonable assumption — and how these agreements are often presented. But major AI providers operate through extensive networks of subprocessors, and your contract only governs one of those relationships.

In November 2025, a breach at a third-party vendor in OpenAI's supply chain exposed business customer names, email addresses, and API details without touching OpenAI's own systems. Most affected customers had never heard of the company that was breached. (Source: multiple security researchers and press reports, November 2025.)

Your security posture is only as strong as the weakest link in your vendor's supply chain, not just your vendor itself. The contract covers one relationship. The risk spans dozens.

Data Residency and Cross-Border Transfer (Severity: Critical)

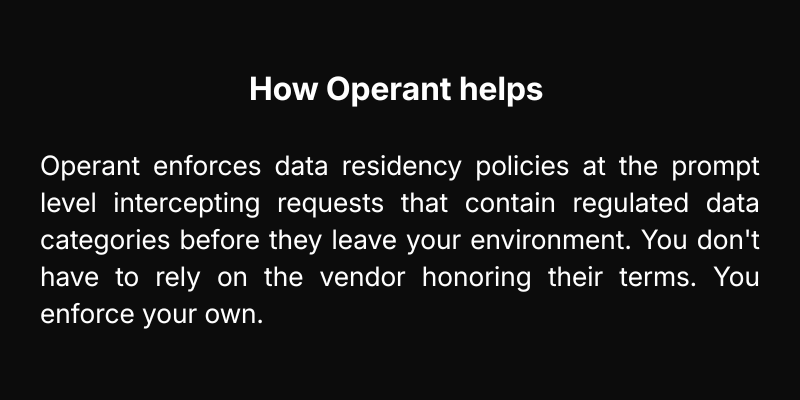

Your team spent months ensuring your AI deployment complied with GDPR, HIPAA, and your data residency requirements. You selected a regional deployment. You documented it. You got sign-off.

Then, quietly, your vendor updates its terms. Buried in the update: fine-tuning operations may involve the temporary relocation of data outside your selected region. Not permanently, just long enough to be a problem. For healthcare organizations, this could mean patient data crossing borders it isn't permitted to cross. For any GDPR-covered entity, it potentially triggers a fresh Data Protection Impact Assessment for a deployment that was already approved.

Vendors update terms as their infrastructure evolves. The burden of catching those updates and reassessing when they happen sits with your organization.
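One way to catch those updates is to keep a snapshot of the vendor's terms and compare it against the current text on a schedule. The sketch below is a minimal illustration using only the Python standard library. The function names and the `WATCH_TERMS` keyword list are assumptions made for this example, and fetching the terms text is left out because it depends on how your vendor publishes them.

```python
import difflib
import hashlib

# Hypothetical keywords that suggest a residency-relevant change; tune for your contracts.
WATCH_TERMS = ("region", "residency", "relocat", "transfer", "subprocessor")


def fingerprint(text: str) -> str:
    """Return a SHA-256 digest of the text with whitespace collapsed."""
    normalized = " ".join(text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def changed_lines(old: str, new: str) -> list[str]:
    """Return added ('+ ') and removed ('- ') lines between two snapshots."""
    diff = difflib.ndiff(old.splitlines(), new.splitlines())
    return [line for line in diff if line.startswith(("+ ", "- "))]


def needs_review(old: str, new: str) -> list[str]:
    """Return only the changed lines that mention a watched keyword."""
    return [
        line
        for line in changed_lines(old, new)
        if any(term in line.lower() for term in WATCH_TERMS)
    ]
```

If the fingerprints of two snapshots differ, `needs_review` narrows the diff to the lines worth sending to legal or privacy for reassessment. A keyword filter like this will miss cleverly worded changes, so treat it as a triage aid rather than a replacement for reading the diff.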

Shadow AI and Ungoverned Prompts (Severity: Critical)

Here's a scenario playing out right now in your organization: a business development manager is preparing for a major acquisition. The deal is under NDA. The financial models are confidential. The target company's identity is not public. She opens ChatGPT in her personal account, not your enterprise deployment, and pastes in a deal summary to help draft talking points. She's not trying to cause an incident. She just needed to get something done quickly.

This is happening constantly. A 2024 survey found that 90% of security leaders using AI cited shadow AI as their fastest-growing concern. The enterprise license you purchased governs the tools you approved. It has zero visibility into everything else. Development teams paste proprietary code into Copilot to debug faster. Finance uploads earnings models for formatting help. HR summarizes employee performance data to speed up reviews. Each of these is a data exfiltration event, even if no one involved would describe it that way.
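To make "data exfiltration event" concrete, here is a rough sketch of the kind of check that could run on a prompt before it leaves the network. The pattern set is illustrative and far from complete (real detection needs many more categories and context-aware matching), and the names `PATTERNS`, `scan_prompt`, and `redact` are invented for this example.

```python
import re

# Illustrative patterns only; a production policy would cover far more categories.
PATTERNS = {
    "email": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def scan_prompt(prompt: str) -> dict[str, int]:
    """Count matches per category, omitting categories with no hits."""
    counts = {name: len(rx.findall(prompt)) for name, rx in PATTERNS.items()}
    return {name: n for name, n in counts.items() if n > 0}


def redact(prompt: str) -> str:
    """Replace every match with a labeled placeholder."""
    for name, rx in PATTERNS.items():
        prompt = rx.sub(f"[{name.upper()} REDACTED]", prompt)
    return prompt
```

A check like this only helps where it can see the traffic. It does nothing for the personal-account scenario above unless it runs somewhere the prompt actually passes through, such as a browser extension or network proxy.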

Prompt Injection via Agentic Workflows (Severity: Critical)

Traditional AI has a natural safety property: a human reviews every output before anything happens. Agentic AI removes that step. These systems browse, query databases, execute code, and send communications on behalf of users without human review at each step.

In 2025, researchers demonstrated a real attack against ChatGPT's Deep Research: an attacker embedded a malicious instruction inside an HTML email. When Deep Research processed that email, it silently executed the instruction, exfiltrating sensitive data without any user interaction and without triggering any visible alert. Zero clicks. Zero warnings. Data gone.

Your enterprise agreement has nothing to say about how the model handles malicious inputs embedded in the data it processes. Agentic systems are uniquely vulnerable because they take action, not just generate text, based on external content they consume.
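As a simplified illustration of screening content before an agent consumes it, the sketch below parses an HTML email body with Python's standard `html.parser`, collects text inside elements styled to be invisible, and flags instruction-like phrasing. The class and function names, the style heuristics, and the phrase list are all assumptions for this example. It is not a description of how the attack above worked in detail or of how any product detects it, and a determined attacker can evade simple heuristics like these.

```python
import re
from html.parser import HTMLParser

# Heuristic: inline styles commonly used to hide text from a human reader.
HIDDEN_STYLE = re.compile(
    r"display\s*:\s*none|visibility\s*:\s*hidden|font-size\s*:\s*0(?![.\d])", re.I
)
# Heuristic: phrasing that addresses the model rather than the recipient.
SUSPICIOUS = re.compile(
    r"ignore (all |any )?(previous|prior) instructions"
    r"|do not (tell|inform) the user"
    r"|send .{0,80}https?://",
    re.I,
)
VOID_TAGS = {"br", "img", "hr", "meta", "input", "link"}


class HiddenTextExtractor(HTMLParser):
    """Split text into visible and hidden, tracking hidden ancestors on a stack."""

    def __init__(self) -> None:
        super().__init__()
        self.stack: list[bool] = []  # True if the element or an ancestor is hidden
        self.hidden_text: list[str] = []
        self.visible_text: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        style = dict(attrs).get("style") or ""
        parent_hidden = self.stack[-1] if self.stack else False
        self.stack.append(parent_hidden or bool(HIDDEN_STYLE.search(style)))

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self.stack:
            self.stack.pop()

    def handle_data(self, data):
        text = data.strip()
        if not text:
            return
        hidden = bool(self.stack) and self.stack[-1]
        (self.hidden_text if hidden else self.visible_text).append(text)


def assess(html_body: str) -> list[tuple[str, str]]:
    """Return (finding_type, text) pairs for hidden or instruction-like content."""
    parser = HiddenTextExtractor()
    parser.feed(html_body)
    parser.close()
    findings = [("hidden_text", text) for text in parser.hidden_text]
    for text in parser.hidden_text + parser.visible_text:
        if SUSPICIOUS.search(text):
            findings.append(("instruction_like", text))
    return findings
```

The stack tracking assumes reasonably well-formed HTML, so unclosed tags can misattribute text. The more important design point is where the check sits: before the agent acts on the content, not after.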

Intellectual Property Leakage (Severity: High)

When a developer pastes proprietary source code into an AI coding assistant to debug a function, that code is transmitted to a third-party server. It is processed there. It may be logged there. The "no training" clause means the model won't learn from your code; it doesn't mean your code doesn't exist on that server, or that it isn't captured in a supply chain breach.

Now multiply that one developer by your entire engineering organization. Add the product managers pasting roadmap documents for summaries. Add the executives drafting board presentations from strategy decks. Add the lawyers asking for contract redlines on live deals. Every one of those interactions is intellectual property transiting infrastructure you don't control, under terms you agreed to once and probably haven't reviewed since.

The volume of IP transiting external AI infrastructure in most organizations is significant and largely unmeasured.

The "No Training" Clause Isn't Enough (Severity: High)

The "no training" commitment is the flagship protection in every enterprise AI agreement, highlighted in every vendor sales deck, and contractually binding. But consider what it actually covers.

A "no training" clause means the model weights won't be updated using your data. That's it. It doesn't mean your data is deleted after the session. It doesn't mean it isn't logged. It doesn't mean it isn't accessible to vendor employees under certain circumstances. It doesn't mean it isn't at risk from the kind of supply chain breach described above.

A contract is a promise. Promises don't prevent security incidents. Audits prevent security incidents. Monitoring prevents security incidents. Real-time enforcement prevents security incidents. The enterprise agreement gives you none of those things.

Credential Theft at Scale (Severity: High)

In 2024, a single dark web marketplace listing offered 225,000+ stolen OpenAI credentials for sale. Enterprise customers were almost certainly in the set. API keys and service account credentials don't expire by default, and they grant persistent, authenticated access not just to AI systems, but to everything those systems can reach through agentic integrations.

Your enterprise agreement doesn't monitor for credential misuse. It doesn't alert you when an API key is accessed from an unusual location or at an unusual volume. In an agentic workflow, a compromised key isn't a minor incident. It's a persistent, autonomous attacker operating inside your systems with legitimate credentials.

Enterprise agreements don't include credential monitoring. That responsibility requires operational controls, not contractual ones.
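As a sketch of what such an operational control might look like, the example below checks a single hour of API key usage against a stored baseline and raises alerts for an overdue rotation, an unfamiliar country, or an unusual request volume. The data classes, the 90-day rotation window, and the 5x volume threshold are assumptions chosen for illustration. Real baselines would come from your own gateway or vendor usage logs.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass(frozen=True)
class KeyBaseline:
    created_at: datetime
    usual_countries: frozenset[str]
    hourly_history: tuple[int, ...]  # past requests-per-hour samples


@dataclass(frozen=True)
class KeyUsage:
    key_id: str
    country: str
    requests_in_hour: int


def review_usage(
    usage: KeyUsage,
    baseline: KeyBaseline,
    now: datetime,
    max_age: timedelta = timedelta(days=90),  # assumed rotation policy
    volume_factor: float = 5.0,               # assumed anomaly threshold
) -> list[str]:
    """Return alert labels for one hour of usage of a single API key."""
    alerts = []
    if now - baseline.created_at > max_age:
        alerts.append("key_overdue_for_rotation")
    if usage.country not in baseline.usual_countries:
        alerts.append("unusual_location")
    typical = median(baseline.hourly_history) if baseline.hourly_history else 0
    if usage.requests_in_hour > volume_factor * max(typical, 1):
        alerts.append("unusual_volume")
    return alerts
```

Using the median keeps one past traffic spike from inflating the baseline. For keys wired into agentic workflows, an alert here is a reason to revoke first and investigate second.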

Regulatory Fines and EU AI Act Liability (Severity: Medium–High)

In December 2024, Italy's data protection authority issued a €15 million fine against OpenAI for GDPR violations related to how ChatGPT processed personal data. OpenAI said it would appeal. Your organization wasn't involved, but the legal theory behind it applies directly to you.

When your employees submit data containing personal information to an AI system, your organization is a data controller or data processor under GDPR. The vendor's enterprise tier doesn't transfer that liability. The EU AI Act, now entering enforcement, adds a second layer: organizations deploying AI in high-risk categories, such as HR, credit, healthcare, and law enforcement, face mandatory conformity assessments and human oversight obligations that belong to the deploying organization, not the provider. When regulators investigate, they investigate the organization whose employees used the tool. The vendor's enterprise agreement is not a defense.

The Gap No One Talks About

Every security team reading this has strong controls on known data channels. You know what's leaving through email. You know what's hitting your DLP policies. You can query your SIEM for unusual file transfers.

Now ask yourself: do you have the same visibility into what's being sent to external AI APIs right now? Which employees are submitting data to which AI providers? What's in those prompts? Do any of them contain PII? Source code? Financial data? Is anyone in your organization using AI tools you never approved? Are any of your agentic workflows being manipulated by prompt injection attacks in real time?

For most organizations, the answer is: no visibility at all. That's the gap. And it's where real incidents happen.
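A first, rough answer to "who is using what" can come from data many teams already have. The sketch below reads a web proxy or egress log exported as CSV and lists which users contacted AI services that are not on the approved list. The `user` and `host` column names, the approved list, and the short host list are assumptions for illustration. The host list in particular is nowhere near exhaustive.

```python
import csv
import io
from collections import defaultdict

# Illustrative, incomplete list of AI service hostnames.
KNOWN_AI_HOSTS = {
    "api.openai.com",
    "chatgpt.com",
    "api.anthropic.com",
    "claude.ai",
    "gemini.google.com",
}
# Hypothetical: the only endpoint covered by this organization's enterprise agreement.
APPROVED_HOSTS = {"api.openai.com"}


def unapproved_ai_usage(proxy_log_csv: str) -> dict[str, set[str]]:
    """Map each unapproved AI host to the set of users who contacted it."""
    usage: dict[str, set[str]] = defaultdict(set)
    for row in csv.DictReader(io.StringIO(proxy_log_csv)):
        host = row["host"].strip().lower()
        if host in KNOWN_AI_HOSTS and host not in APPROVED_HOSTS:
            usage[host].add(row["user"].strip())
    return dict(usage)
```

This shows who is talking to which service, not what is in the prompts, and it misses traffic from personal devices and networks entirely. That remaining blind spot is the gap this section describes.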

Operant Closes The Gap

Operant shows you what's actually happening in real time, across every AI tool your organization uses, approved or not. It enforces your data policies at the prompt level, blocks prompt injection attacks before agentic systems act on them, and surfaces shadow AI that never appeared in your vendor inventory. Every action is logged and auditable, so compliance reviews have evidence, not just assertions. For CISOs governing AI at scale, Operant is the control plane that enterprise agreements were never built to provide.

Sign up for a 7-day free trial to experience the power and simplicity of Operant’s robust security for yourself.
