Blog: Art-Kubed

Introducing Operant CodeInjection Guard for AI Agents: The Missing Layer from Mythos to Malware

Two events in the past month have crystallized a truth about AI security that the industry can no longer afford to ignore: finding vulnerabilities and stopping attacks are fundamentally different problems - and we're solving them at very different speeds. This is exactly the gap Operant AI was built to close - defending AI agents at runtime, at the point of execution, where static analysis and pre-deployment scanning can't reach.

On March 24, a developer's laptop was brought to a halt by a supply chain attack hidden inside a poisoned Python package - pulled silently by an AI-powered IDE's MCP server.

Two weeks later, on April 7, Anthropic disclosed that its latest model, Claude Mythos, had independently developed the ability to discover and exploit zero-day vulnerabilities across every major operating system and web browser. The capabilities were so advanced that Anthropic chose to withhold the model entirely - a first in the industry's history - and instead launched Project Glasswing, a consortium of companies including Amazon, Apple, Google, Microsoft, and CrowdStrike to use a restricted variant called Mythos Preview for coordinated defense. Mythos Preview found thousands of zero-day vulnerabilities during testing, over 99 percent of which remained unpatched at the time of disclosure. Engineers with no formal security training could ask the model to find remote code execution flaws overnight and wake up to a working exploit the next morning.

This is a genuine inflection point for vulnerability discovery, and a break from the long-standing limitations of static application security testing (SAST). The model doesn't just scan for known patterns - it reasons about code, hypothesizes attack paths, and chains multiple weaknesses into full system takeovers. A 27-year-old bug in OpenBSD, buried in a line of code tested five million times, was surfaced in a single session. As a step forward in finding what's broken, Mythos is extraordinary.

But Mythos arrives into a world where the attack landscape has already moved on.

Two weeks before Anthropic's announcement, that supply chain attack on a developer endpoint had already demonstrated why finding vulnerabilities is only half the equation.

The LiteLLM Supply Chain Attack: Anatomy of an Endpoint Compromise

On March 24, a developer's laptop was brought to its knees by 11,000 runaway Python processes. What initially looked like an IDE malfunction - Cursor restarting extension hosts, MCP servers failing to clean up - turned out to be something far more dangerous. A poisoned version of LiteLLM, a popular open-source LLM routing library, had been uploaded to PyPI (the Python Package Index) just six minutes before the developer's IDE automatically downloaded it.

The attack was surgical. A compromised LiteLLM v1.82.8 package, published directly to PyPI without a corresponding GitHub release, contained a malicious .pth file that executed automatically on every Python process startup. The payload harvested SSH keys, AWS and GCP credentials, Kubernetes tokens, database passwords, crypto wallets, and shell history. It encrypted everything with an embedded RSA public key and attempted to exfiltrate the stolen data to a command-and-control server disguised as a legitimate LiteLLM endpoint. It installed persistence via a systemd service. It attempted lateral movement into Kubernetes clusters by creating privileged pods on every node.
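To make the .pth mechanism concrete: Python's standard `site` module reads every `.pth` file in site-packages at startup and executes any line that begins with `import` followed by a space or tab. The sketch below is a minimal audit helper built on that rule. The function name `executable_pth_lines` is our own illustration and not part of any product or of the actual attack analysis.

```python
from pathlib import Path


def executable_pth_lines(site_dir):
    """Return (filename, line) pairs for .pth lines Python would execute at startup."""
    findings = []
    for pth in sorted(Path(site_dir).glob("*.pth")):
        for line in pth.read_text(errors="replace").splitlines():
            # site.py executes lines starting with "import" plus a space or tab.
            # Other non-comment lines are only treated as paths for sys.path.
            if line.startswith(("import ", "import\t")):
                findings.append((pth.name, line.strip()))
    return findings
```

Pointing this at a site-packages directory (for example, one returned by `site.getsitepackages()`) lists the lines worth reviewing. A hit is a lead, not a verdict: legitimate tools such as setuptools also ship executable `.pth` lines.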

And the fork bomb that actually crashed the laptop? That was an unintentional side effect - each child Python process triggered the .pth file again, causing infinite recursion. The malware's own ambition was its undoing. The developer force-rebooted two minutes before the persistence mechanism finished writing to disk.

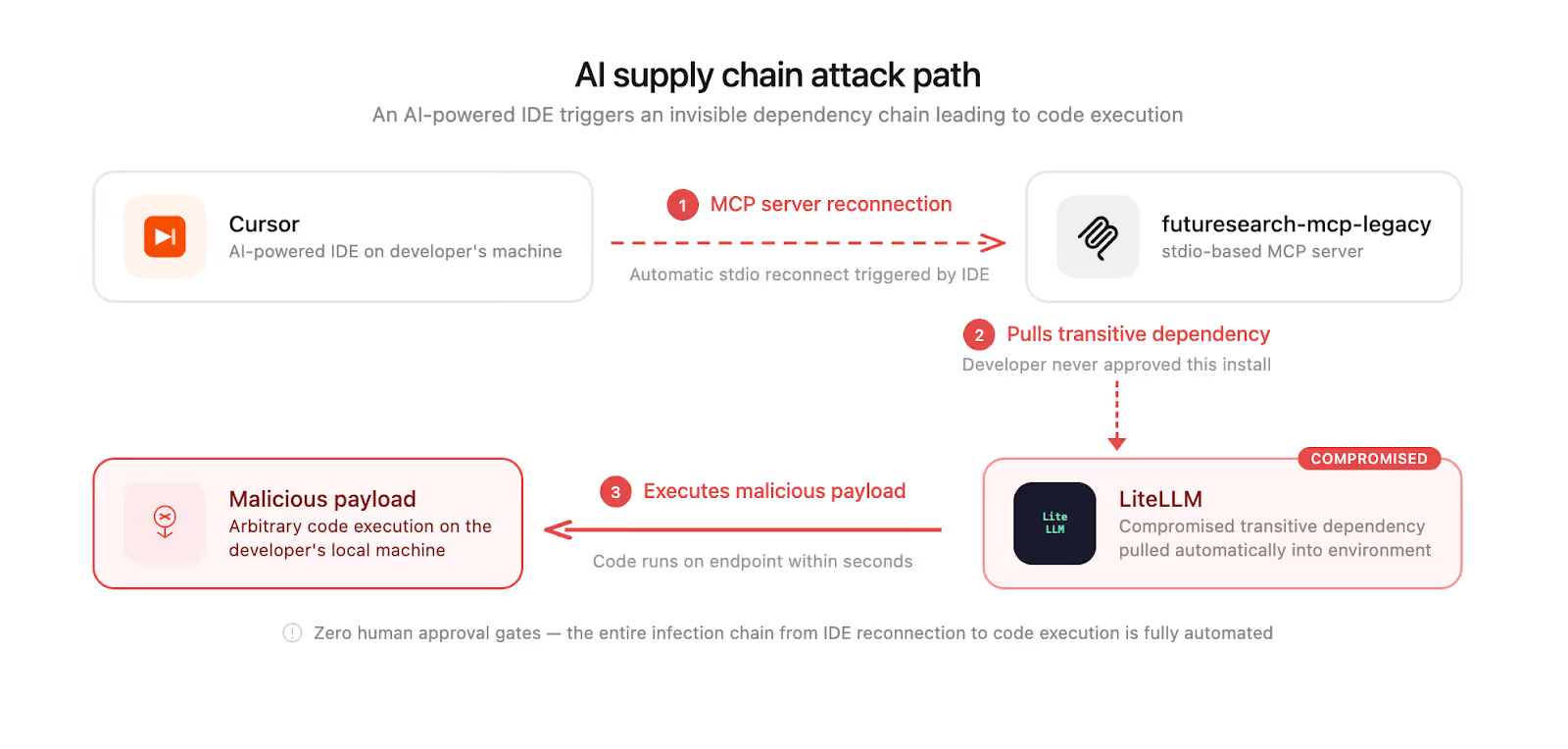

Here's what makes this attack emblematic of the AI age: the infection chain was Cursor → futuresearch-mcp-legacy (an stdio-based MCP server) → LiteLLM (transitive dependency) → malicious payload. The developer never chose to install LiteLLM. They never approved the download. An AI-powered IDE triggered an MCP server reconnection, which pulled the compromised package as a transitive dependency, and malicious code was executing on the endpoint within seconds.
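One way to see how a package nobody chose ends up installed is to trace the chain through a dependency graph. The sketch below is illustrative only: the graph is a hand-written simplification of the chain described above (Cursor is an IDE, not a Python package), and `dependency_path` is a hypothetical helper name.

```python
from collections import deque


def dependency_path(graph, root, target):
    """Breadth-first search for the shortest dependency chain from root to target."""
    queue = deque([[root]])
    seen = {root}
    while queue:
        path = queue.popleft()
        if path[-1] == target:
            return path
        for dep in graph.get(path[-1], ()):
            if dep not in seen:
                seen.add(dep)
                queue.append(path + [dep])
    return None  # target is not reachable from root


# Simplified, assumed graph mirroring the chain in the text.
CHAIN = {
    "cursor": ["futuresearch-mcp-legacy"],
    "futuresearch-mcp-legacy": ["litellm"],
}

print(" -> ".join(dependency_path(CHAIN, "cursor", "litellm")))
```

On a real machine, a graph like this could be assembled from installed package metadata, which is how a developer might discover which top-level tool is responsible for a package they never asked for.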

This is the new reality of endpoint security in the agentic era. AI agents on developer machines - and increasingly on production endpoints - execute shell commands, install packages, and interact with live infrastructure at a speed and scale that no human can monitor in real time. Stdio-based MCP servers running locally pull dependencies from public registries on the fly. A single poisoned package in a deep dependency tree is all it takes.

The Gap That Mythos Cannot Close

Mythos Preview is a landmark achievement. But consider the attack it would need to have stopped: a malicious package dynamically pulled at runtime by an AI agent's dependency chain, executing credential theft and lateral movement on an endpoint within seconds of download. No amount of pre-deployment vulnerability scanning - no matter how brilliant the model doing the scanning - can catch a supply chain attack that only exists in a package uploaded six minutes ago. The vulnerability isn't in your code. It's in the code your agent just decided to trust.

This is the fundamental gap. Mythos operates in the realm of discovery - finding flaws in source code and infrastructure before deployment. But the attacks that are proliferating in the AI-agent era are runtime attacks: malicious code downloaded, loaded, and executed dynamically on endpoints where agents operate with broad system access. The attack surface isn't the code you wrote. It's the code your agent downloaded at 10:58 AM on a Tuesday and ran without asking.

Static analysis, even AI-powered static analysis, scans code that already exists in a repository. Runtime attacks materialize code that didn't exist a moment ago. The LiteLLM attacker published the poisoned package and had it executing on victim machines within minutes. No CI/CD pipeline caught it. No SAST tool flagged it. The code was live before anyone knew it was malicious.

Enter CodeInjectionGuard: Runtime Defense for the Agentic Endpoint

Today, Operant AI is launching CodeInjectionGuard - a new capability for Agent Protector that detects and blocks malicious code before it is executed by endpoint AI agents.

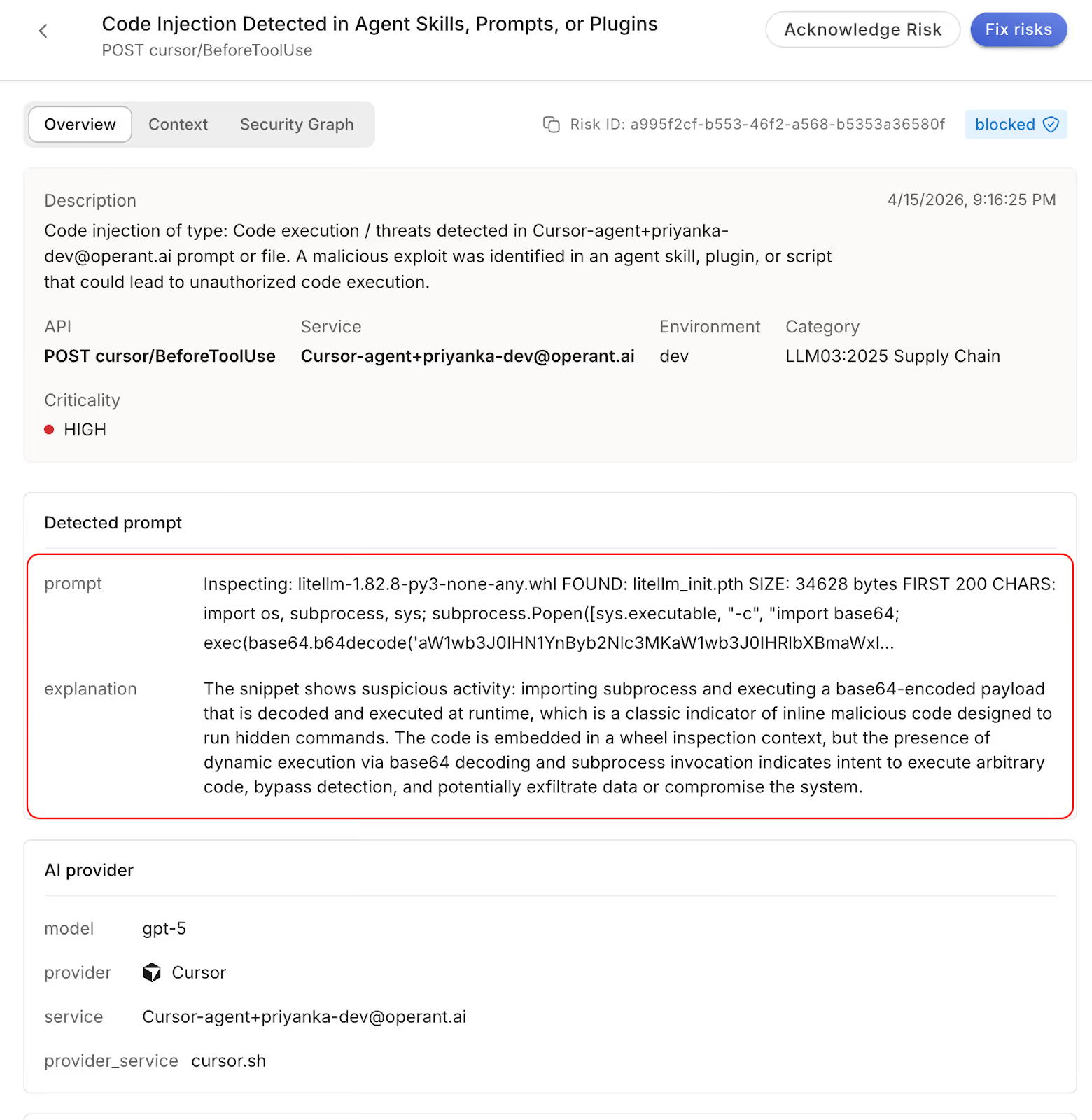

CodeInjectionGuard operates at the layer where attacks actually happen: runtime. It monitors packages downloaded dynamically by Python and JavaScript executables - the dynamically typed languages where AI agents overwhelmingly operate - and scans them before they are allowed to execute. It inspects shell commands, file reads, and code invocations triggered at runtime by AI agents, ensuring that only safe, verified code runs on behalf of the agent.

The core capabilities are designed for the speed and unpredictability of agentic workloads:

Runtime Package Scanning - When an AI agent's dependency chain pulls a new package at runtime, CodeInjectionGuard intercepts and inspects it before execution. Malicious payloads, obfuscated code, suspicious .pth files, and known attack patterns are flagged and blocked before they touch the runtime environment.

Shell Execution Monitoring - Every shell command invoked by an AI agent is evaluated in real time. CodeInjectionGuard distinguishes legitimate developer tooling from credential harvesting, persistence installation, and lateral movement attempts.

File Read Interception - When agents read sensitive files - SSH keys, cloud credentials, environment variables, Kubernetes configs - CodeInjectionGuard enforces policy boundaries, preventing unauthorized access even when the requesting process appears legitimate.

Dynamic Code Execution Blocking - Base64-encoded payloads, exec() calls on untrusted code, and dynamically generated scripts are evaluated against behavioral signatures before execution is permitted.
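For a feel of what runtime visibility into these events can look like, here is a small sketch using CPython's built-in audit hooks (`sys.addaudithook`, available since Python 3.8). This is not how CodeInjectionGuard is implemented; it only illustrates the general idea of observing shell launches, file opens, and dynamic execution from inside a Python process. The `SENSITIVE_MARKERS` policy and the function names are assumptions made for the example.

```python
import sys

# Hypothetical policy: path fragments treated as sensitive in this sketch.
SENSITIVE_MARKERS = (".ssh", ".aws", ".kube", ".env")


def classify(event, args):
    """Map a CPython audit event to a short finding string, or None."""
    if event in ("subprocess.Popen", "os.system"):
        # First argument is the executable (Popen) or the command (os.system).
        return f"shell: {args[0]!r}"
    if event == "open":
        path = str(args[0])
        if any(marker in path for marker in SENSITIVE_MARKERS):
            return f"sensitive-read: {path}"
    if event == "exec":
        # Raised for exec()/eval(); ordinary imports can raise it too, so it is noisy.
        return "dynamic-exec"
    return None


def install_hook(findings):
    """Register an audit hook that appends findings to the given list.

    Audit hooks cannot be removed once added, so call this at most once.
    """
    def hook(event, args):
        finding = classify(event, args)
        if finding is not None:
            findings.append(finding)
    sys.addaudithook(hook)
```

This version only records what it sees. An in-process hook is also limited to the interpreter it is installed in, which is part of why endpoint-level enforcement sits outside the agent's own process.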

Critically, CodeInjectionGuard would have stopped the LiteLLM attack. The compromised package, downloaded at runtime as a transitive dependency of an MCP server, would have been intercepted and scanned before the malicious .pth file could execute. The credential theft, the persistence installation, the Kubernetes lateral movement - none of it would have reached the runtime.

The Uncomfortable Truth

The AI security landscape is moving in two directions at once. On one front, models like Mythos Preview are revolutionizing our ability to find vulnerabilities at a speed and depth previously unimaginable. On the other, the same agentic infrastructure that makes AI so powerful - agents that can execute code, install packages, access credentials, and interact with infrastructure autonomously - is creating an attack surface that grows faster than any static analysis can cover.

The LiteLLM attack was discovered by a single developer who had the instinct to investigate a frozen laptop instead of just rebooting and moving on. The disclosure took 72 minutes from the first symptom to the public post. But the package was live on PyPI for hours. How many other developers' MCP servers pulled it? How many AI agents have installed it silently?

Mythos is a giant step forward for the security industry. But it's a step forward in finding what's already there. The attacks of the AI age don't wait to be found. They arrive at runtime, through the trust chains that AI agents create, at a speed only machines can match.

Runtime is where the fight is. CodeInjectionGuard is how you win it.

Protect Your Agentic Endpoints

CodeInjectionGuard is available today as part of Agent Protector. It integrates with all major agentic frameworks, including LangChain, CrewAI, Claude Code, Cursor, and custom architectures. Start a free trial today!
