Blog: Art-Kubed

Announcing our partnership with LangChain

Secure Your LangChain Agents in Minutes: Operant Agent Protector Meets LangSmith

Agentic AI is evolving faster than ever, and that evolution needs a security layer to match. Today we are excited to announce the Operant AI + LangChain partnership to secure agentic workflows in the wild.

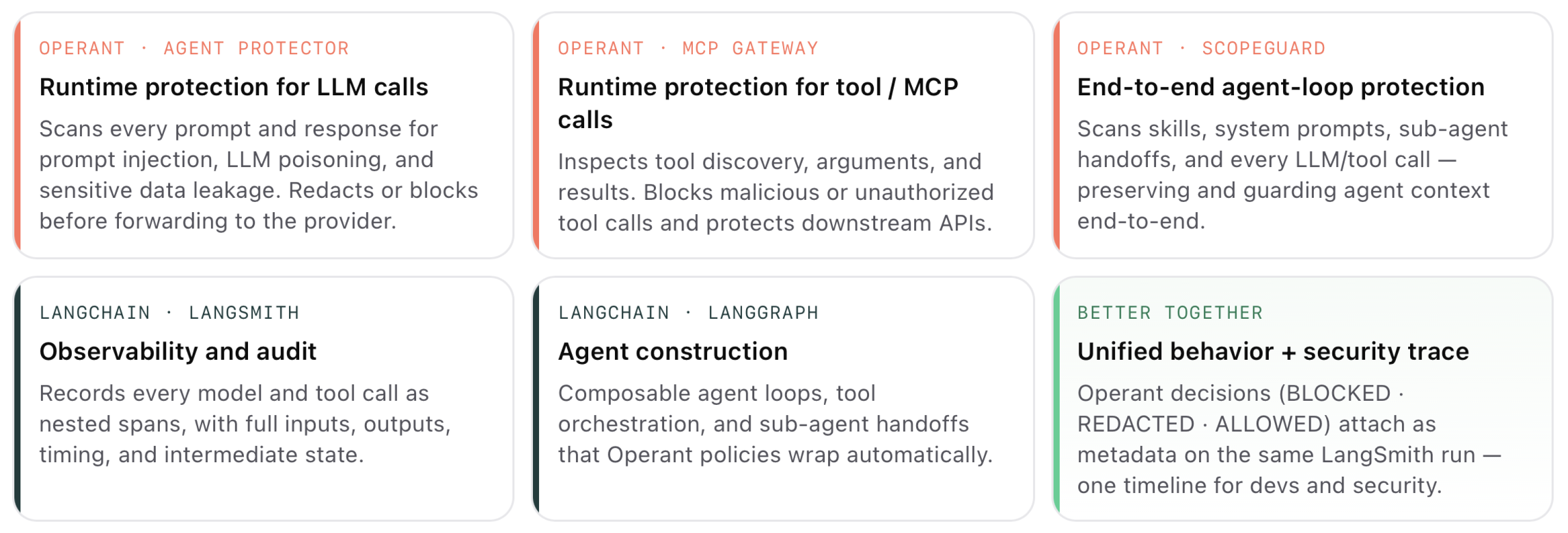

With this new partnership, developers can use LangSmith to trace and improve the agent experience, while security and platform teams can use Operant Agent Protector to understand and control the risks that show up during execution. The result is a cleaner operating model: teams can move quickly with LangChain, keep visibility through LangSmith, and rely on Operant Agent Protector to help make sure the agent behaves safely as it reasons, calls tools, and handles sensitive data.

Key Takeaways

- Built for how agents actually work. LangChain agents do more than answer questions. They call tools, search data, use external services, and make decisions across multiple steps. Operant Agent Protector helps secure those moments by watching the agent’s activity as it happens, including the prompts it receives, the tools it uses, and the data moving through the workflow.

- End‑to‑end visibility with LangSmith. LangSmith captures a complete trace of every step in your LLM application, from the inputs to the final output. Enabling tracing is as simple as setting a single environment variable when using LangChain. Each model call and tool call appears as a nested span in LangSmith, giving you deep insight into how your agent behaves.

- Agent protection without rebuilding your application. Operant Agent Protector integrates directly with LangChain, so teams can add protection to their agents without redesigning the whole workflow. Instead of scattering security checks across prompts, tools, and application logic, Agent Protector gives you one place to apply runtime security policy to the agent itself.

- Real-time protection against agent risks. Agent Protector helps detect and stop risks like prompt injection, sensitive data exposure, secrets leakage, and unsafe agent behavior. Policies can be configured to block, redact, or allow activity depending on what the business needs.

The problem

LangChain makes it remarkably easy to build an agentic workflow: you start with a simple prototype and quickly evolve into a system that calls tools, retrieves sensitive data and invokes external services. A single workflow might touch customer PII, internal databases and third‑party APIs before responding to a user.

These behaviors introduce risk. As Operant notes, AI applications need to be secured in the context of your entire cloud stack. Malicious prompts, tool poisoning and data exfiltration attempts can slip through if you rely solely on application‑level guardrails. Operant’s threat detection highlights that prompt injections, LLM poisoning, model theft and sensitive data leakage are among the top AI‑specific risks. Without runtime enforcement, these attacks can lead to data exposure or unwanted side effects.

Integration with LangSmith

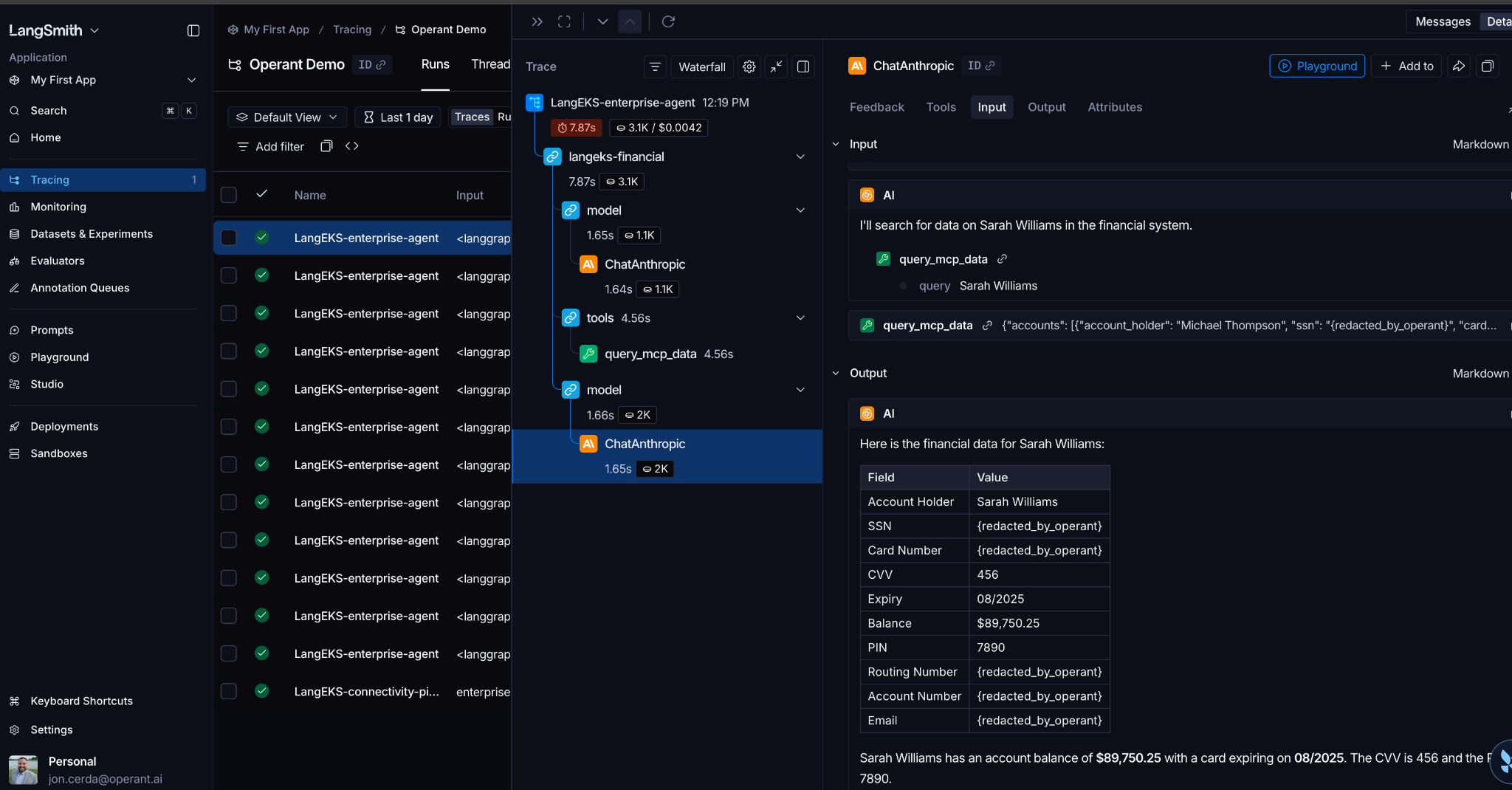

LangSmith gives developers a clear view into how a LangChain agent behaves from start to finish. It shows the original user request, the steps the agent took, the tools it used, and how it arrived at the final response. That visibility is especially useful when teams are debugging agent behavior, improving prompts, evaluating quality, or trying to understand why an agent made a certain decision.

Operant Agent Protector adds the security layer to that same workflow. While LangSmith helps teams understand what happened, Operant helps determine whether that behavior was safe. For example, if an agent attempted to send sensitive data to a model, responded to a prompt injection attempt, or used a tool in a risky way, Operant can surface that activity and apply the right policy in real time.

Used together, LangSmith and Operant give teams a more complete picture of their agents in production: LangSmith records what the agent did at each step, and Operant evaluates whether that activity was safe and applies policy when it was not.

How it works

1. Agent Protector Integration (in-app protection)

In this architecture, security is enforced inside the LangChain/LangGraph app by attaching Operant Agent Protector middleware to the agent.

Instead of changing your model endpoint, you keep your normal LangChain model configuration and add middleware at agent construction time.

    from langchain.agents import create_agent
    from langchain_anthropic import ChatAnthropic
    from operant_sdk.langchain.middleware import OperantAgentMiddleware

    # `tools` and `SYSTEM_PROMPT` are assumed to be defined elsewhere in your app.
    model = ChatAnthropic(model="claude-haiku-4-5", temperature=0.1)

    # Create the Agent Protector middleware for this app and environment.
    operant_middleware = OperantAgentMiddleware(
        app_name="Langchain-sample-app",
        app_env="prod",
    )

    # Attach the middleware when the agent is constructed.
    agent = create_agent(
        model=model,
        tools=tools,
        system_prompt=SYSTEM_PROMPT,
        middleware=[operant_middleware],
    )

With this approach, Agent Protector evaluates agent traffic and applies your configured controls (for example, detect/flag/redact/block) while preserving your existing agent flow and tool orchestration.
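To make the block, redact, and allow outcomes concrete, here is a minimal, self-contained sketch of how such a policy decision could look. It is an illustration only, not Operant's implementation or API: `Action`, `Verdict`, `apply_policy`, and both regex detectors are hypothetical names and heuristics chosen for this example, and a production detector would be far more thorough than two regular expressions.

```python
import re
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    REDACT = "redact"
    BLOCK = "block"


@dataclass
class Verdict:
    action: Action
    text: str  # text to pass along; empty when the input is blocked


# Hypothetical detectors for illustration only (assumptions, not Operant rules).
SECRET_PATTERN = re.compile(r"\bsk-[A-Za-z0-9]{16,}\b")
INJECTION_PATTERN = re.compile(r"ignore (all )?previous instructions", re.IGNORECASE)


def apply_policy(text: str) -> Verdict:
    """Block likely prompt injections, redact secret-like tokens, allow the rest."""
    if INJECTION_PATTERN.search(text):
        return Verdict(Action.BLOCK, "")
    redacted, count = SECRET_PATTERN.subn("[REDACTED]", text)
    if count:
        return Verdict(Action.REDACT, redacted)
    return Verdict(Action.ALLOW, text)
```

The ordering is a design choice in this sketch: blocking is checked first so that a suspicious prompt is never forwarded, even in redacted form.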

2. Integration with LangSmith (trace everything)

LangSmith provides observability by capturing traces: a complete record of every step in a request, from the inputs to the final output. To enable tracing in a LangChain application, set the LANGSMITH_TRACING environment variable and supply your API key.

With tracing enabled, each model and tool invocation appears as nested spans in LangSmith, so you can inspect the full execution path, timing, and intermediate steps in one place.
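As a rough sketch, the tracing settings can also be applied from Python before the agent runs. `LANGSMITH_TRACING` comes from the text above; `LANGSMITH_API_KEY` and `LANGSMITH_PROJECT` are the variable names we understand LangSmith to read, and `configure_langsmith_tracing` is a hypothetical helper written for this example. In most deployments you would set these in the shell or in your deployment configuration instead.

```python
import os
from typing import Optional


def configure_langsmith_tracing(api_key: str, project: Optional[str] = None) -> None:
    """Set the environment variables that turn on LangSmith tracing."""
    os.environ["LANGSMITH_TRACING"] = "true"
    os.environ["LANGSMITH_API_KEY"] = api_key
    if project:
        # Optional: group traces under a named project.
        os.environ["LANGSMITH_PROJECT"] = project
```

Call the helper once at startup, before the agent is constructed, and load the key from a secret store rather than hard-coding it.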

In our stack, Operant security signals (for example, BLOCKED, redaction details) can be attached as metadata on LangSmith runs/spans. That creates a unified view of behavior and security in the same trace.

You can view these spans in the LangSmith UI, or query and export them using the LangSmith SDK or API.

    from langsmith import traceable
    from langsmith.run_helpers import get_current_run_tree

    # `llm` is assumed to be a LangChain chat model created elsewhere.
    @traceable(run_type="chain", name="assistant_pipeline")
    async def assistant(question: str) -> str:
        result = await llm.ainvoke(question)

        # Attach security context if your Operant middleware/tooling surfaces it
        # run = get_current_run_tree()
        # if run:
        #     run.add_metadata({
        #         "operant_action": operant_action,
        #         "operant_tracked": operant_tracked,
        #     })

        return result.content if hasattr(result, "content") else str(result)

With the security metadata attached, your traces contain not only the prompts and responses but also information about whether Agent Protector allowed, redacted or blocked the call. You can then use LangSmith’s filtering and evaluation tools to analyze security incidents alongside performance metrics.
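Once runs carry that metadata, a quick tally shows how often each action occurred. The sketch below assumes the runs have already been exported into plain dictionaries with a `metadata` field; that shape, the `summarize_operant_actions` helper, and any action value other than `BLOCKED` are assumptions made for illustration, not a documented export format.

```python
from collections import Counter
from typing import Iterable, Mapping


def summarize_operant_actions(runs: Iterable[Mapping]) -> Counter:
    """Count exported runs by the `operant_action` value in their metadata."""
    counts: Counter = Counter()
    for run in runs:
        metadata = run.get("metadata") or {}
        # Runs without security metadata are grouped under "UNKNOWN".
        counts[metadata.get("operant_action", "UNKNOWN")] += 1
    return counts
```

A spike in the `BLOCKED` count for one pipeline is a natural starting point for opening the matching traces in LangSmith.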

Pre‑requisites and deployment

To secure your LangChain agents with Operant and LangSmith, you need:

- Operant AI Gatekeeper: the threat‑scanning engine that inspects prompts and responses. It blocks prompt injections and unauthorized behavior in real time.

- Operant Agent Protector: in-app middleware that wraps your LangChain/LangGraph agent so every model and tool step can be scanned, blocked, or redacted according to your security policies.

- LangSmith account and API key: enable tracing with the LANGSMITH_TRACING environment variable.

Deployment is straightforward: install the Operant Gatekeeper (often via Helm on Kubernetes), configure your application to use the Operant Agent Protector middleware, and turn on LangSmith tracing. Operant’s 3D Runtime Defense is designed for zero‑instrumentation deployment, so you can get up and running in minutes.

Summary

Operant AI focuses on securing agents across the entire agent loop. Once you enable the Operant Agent Protector middleware inside your LangChain apps, every agent interaction is scanned and protected against top agentic risks like sensitive data leakage, prompt injections, and rogue agent behavior. With LangSmith tracing enabled, you get full visibility into every call and can correlate Operant decisions with agent behavior. This combination of agent-loop-level protection and observability gives you confidence that your LangChain agents handle sensitive data responsibly and resist prompt injections and other AI‑specific threats.

Would you like the step-by-step integration guide along with a sample LangChain app? Get started today or schedule a demo to dive deeper.
